Optimal placements and unsolved math problems are among the interesting problems discussed in the book, Tomorrow’s Math, Second Edition by C. Stanley Ogilvy. On chapter two, pg.23-24 he asks how a land’s defenses may be best defended by n defense stations on a disk shaped land area? They state it had known answers for n<6, and “a general solution seems remote at present”.

Variations upon the problem include the “Facility location problem” where the distance from stations to any point is minimized, and drone based base station for communication is a similar consideration.

Let’s see if we can make an iterative solution to find optimal locations starting with points that you choose – finding a local minimum where the maximal distance from stations is low.

I started with a Matplotlib example where you can choose points (within the unit square (0,0) to (1,1) by default. To this example I added a function to go through hundreds of points to find the distance from one of the stations. You would think you could do this easily with two for loops of range(0,1,0.01) to go through each in the unit square. However you will get this error:

TypeError: 'float' object cannot be interpreted as an integerPython does not allow this for fractions, but a workaround is the Numpy arange, which behaves the way you would want the Python range function to in this case. In fact there are a lot of Numpy functions that we will prefer to the Python builtins in some cases in this project.

import time

import numpy as np

import matplotlib.pyplot as plt

from time import sleep

def tellme(s):

print(s)

plt.title(s, fontsize=16)

plt.draw()

def greatestKDist(pts):

maxdist = 0 #Start 0 and increase...

maxpt = []

for x in np.arange(0,1,0.01):

for y in np.arange(0,1,0.01):

closeone = 1E99 # 10^99 is large, decrease this to the closest nearby station distance.

#What is closest?

for [ptx, pty] in pts:

dist = np.sqrt( np.square(x-ptx) + np.square(y-pty) )

if dist < closeone:

closeone = dist

if( closeone > maxdist ):

#This point is currently the max closest distance found.

maxdist = closeone

maxpt = [x,y]

#print("max pt, dist:")

#print(maxpt)

return maxdist

n = int(input("How many stations?"))

plt.figure()

plt.xlim(0, 1)

plt.ylim(0, 1)

tellme('Choose beginning points')

while True:

pts = []

while len(pts) < n:

pts = np.asarray(plt.ginput(n, timeout=-1))

if len(pts) < n:

tellme('Too few points, starting over')

time.sleep(1) # Wait a second

print(pts)

print( "max distance from station is %s" % (greatestKDist(pts),) )

After some adjustments to generate the above function one can test that this finds maximal-distance points from the n points you select. Now to add the optimization to find better solutions…

Optimizing solutions

As you may remember from calculus class or in learning about machine learning, finding a local minimum is done by going down the slope downward – as in real life, you can get to a stream or quite possibly a village if you get lost. 🙂 You could use Newton’s method to find a minimum, but just going the right direction and going slower and slower as we go on, should work on a computer where we can run hundreds of calculations a second. To optimize, I try the calculation greatestKdist(pts) for the points with some minor change up, down, left or right – which can be described as adding one of [1,0],[-1,0],[0,1], or [0,-1] times some adjustment distance length:

#Adjust until optimal:

directions = [[1,0],[-1,0],[0,1],[0,-1]]

adjustment = 0.1

while adjustment > 0.001:

changes = 0

for i in range(len(pts)): #Each point

#What direction should this go in?

for direction in directions:

#If we say tmp = pts then it would change when changing it. make a copy.

tmp = [x for x in pts]

# array + array appends them using python "+", so use add:

# (Python * doesn't multiply arr * float

tmp[i] = np.add(tmp[i], np.multiply(direction,adjustment))

if( greatestKDist(pts) > greatestKDist(tmp) ):

#Found a more optimal one.

print("%0.4lf %0.4lf should move %s,%s to %0.4lf,%0.4lf" %

(pts[i][0], pts[i][1], direction[0]*adjustment,direction[1]*adjustment, tmp[i][0], tmp[i][1] ))

pts[i] = tmp[i]

#changed the actual position to a better one.

changes += 1

print('changed %s positions' % (changes,) )

plt.clf() #Clear previous

# Get x and y with p[0] and p[1] of each [x,y] point, plot it with linewidth=0, no line:

plt.plot( [p[0] for p in pts], [p[1] for p in pts], color='green', marker='o', lw='0')

plt.xlim(0, 1)

plt.ylim(0, 1)

plt.pause(0.1) # render to screen

Pulling this all together, and the making the adjustment lower in each case that it cannot do further optimization to lower the greatestKDist(pts), we have this full script that can be run to find the max-distance and max distance once moving the points to find an optimal pattern. So have we found a “general solution” as the book describes?

import time

import numpy as np

import matplotlib.pyplot as plt

from time import sleep

def tellme(s):

print(s)

plt.title(s, fontsize=16)

plt.draw()

def greatestKDist(pts):

maxdist = 0 #Start 0 and increase...

maxpt = []

for x in np.arange(0,1,0.01):

for y in np.arange(0,1,0.01):

closeone = 1E99 # 10^99 is large, decrease this to the closest nearby station distance.

#What is closest?

for [ptx, pty] in pts:

dist = np.sqrt( np.square(x-ptx) + np.square(y-pty) )

if dist < closeone:

closeone = dist

if( closeone > maxdist ):

#This point is currently the max closest distance found.

maxdist = closeone

maxpt = [x,y]

#print("max pt, dist:")

#print(maxpt)

return maxdist

n = int(input("How many stations?"))

plt.ion()

plt.figure()

plt.xlim(0, 1)

plt.ylim(0, 1)

plt.show()

tellme('Choose beginning points')

while True:

pts = []

while len(pts) < n:

pts = np.asarray(plt.ginput(n, timeout=-1))

if len(pts) < n:

tellme('Too few points, starting over')

time.sleep(1) # Wait a second

print(pts)

print( "max distance from station is %s" % (greatestKDist(pts),) )

sleep(1)

#Adjust until optimal:

directions = [[1,0],[-1,0],[0,1],[0,-1]]

adjustment = 0.1

while adjustment > 0.001:

changes = 0

for i in range(len(pts)): #Each point

#What direction should this go in?

for direction in directions:

#If we say tmp = pts then it would change when changing it. make a copy.

tmp = [x for x in pts]

# array + array appends them using python "+", so use add:

# (Python * doesn't multiply arr * float

tmp[i] = np.add(tmp[i], np.multiply(direction,adjustment))

if( greatestKDist(pts) > greatestKDist(tmp) ):

#Found a more optimal one.

print("%0.4lf %0.4lf should move %s,%s to %0.4lf,%0.4lf" %

(pts[i][0], pts[i][1], direction[0]*adjustment,direction[1]*adjustment, tmp[i][0], tmp[i][1] ))

pts[i] = tmp[i]

#changed the actual position to a better one.

changes += 1

print('changed %s positions' % (changes,) )

plt.clf() #Clear previous

# Get x and y with p[0] and p[1] of each [x,y] point, plot it with linewidth=0, no line:

plt.plot( [p[0] for p in pts], [p[1] for p in pts], color='green', marker='o', lw='0')

plt.xlim(0, 1)

plt.ylim(0, 1)

plt.pause(0.1) # render to screen

if changes == 0:

adjustment = adjustment/2

print( "max distance from station is %s" % (greatestKDist(pts),) )

if plt.waitforbuttonpress():

break

Experimenting

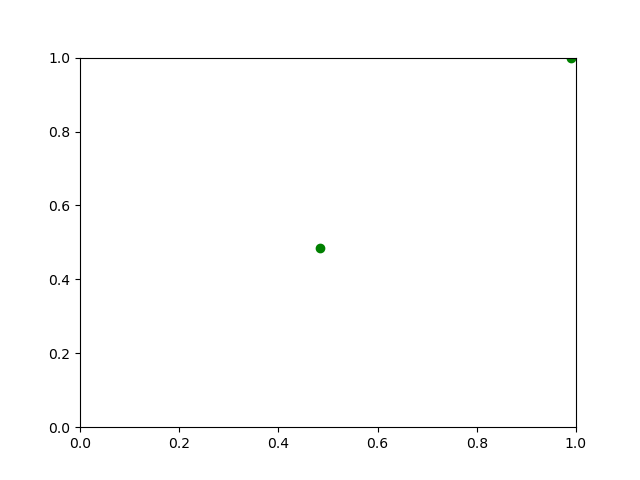

Run the given code, giving it a “1” and selecting any point, will give the center of the chart which is not surprising, being the optimal point for a station. Selecting two and clicking two around the middle and it will space them somewhat evently within the chart, but not always the most optimal way…

Now by clicking various different starting points, we can find some interesting optimizations and non optimizations. Clicking 4 points at about (0,0) produces just one going to the middle and staying there. In fact, selecting to choose two points and placing them near (0,0) or any other corner, makes it adjust one dot to the center and then make no other change.

Two points, when placed at a corner, will be optimized by this code to one central station and one stuck at the corner – because the greatest distance to a station will be about half the distance across, no matter where that corner one moves to nearby. This represents a local minima, one that can’t be moved out of without moving both the points some distance, which this particular algorithm doesn’t do.

This is why various machine learning algorithms must be adjusted and retried with random possibilities to find possible better solutions! There has been much written on the subject in various cases in which using randomness can add accuracy – also in neural nets and other machine learning algorithms.

This all goes to show that there still may be a way that seems right, but unless there is a mathematically rigorous proof then it doesn’t entirely hold. That is what they meant by proving a minimum for a given area for n>5 points.

Github link: here.